1. Introduction

My homelab is a self-hosted environment built for continuous learning, experimentation, and automation.

It’s the foundation for all projects showcased in this portfolio — from containerized applications to GitOps-managed Kubernetes clusters.

2. Hardware

The homelab runs a mix of repurposed and modern hardware, clustered with Proxmox to host Docker and Kubernetes workloads.

Core Nodes

- PC1: Intel Core i3-7100U, 4 GB RAM, 128 GB SanDisk SSD (SATA)

- PC2: Intel Core i5-8500T, 16 GB RAM, 256 GB SSD (NVMe)

- PC3: AMD Ryzen 9 5900X, 64 GB RAM, 2 TB SSD (NVMe), RTX 3060 LHR

Storage

- MyBook Duo: 2 × 10 TB drives in RAID 1

Networking

- Incoming Firewall: Intel N100 i226-V Fanless Mini PC (6× 2.5G)

- Router: TP-Link Archer AX55 (Wi-Fi 6)

- Switch: TP-Link TL-SG108E (VLAN-capable)

- USB NICs: TP-Link UE306

Management Device

- MacBook Pro (2021): M1 Max, 32 GB RAM, 1 TB SSD

The setup serves as a platform for testing, learning, and documenting modern DevOps and GitOps practices.

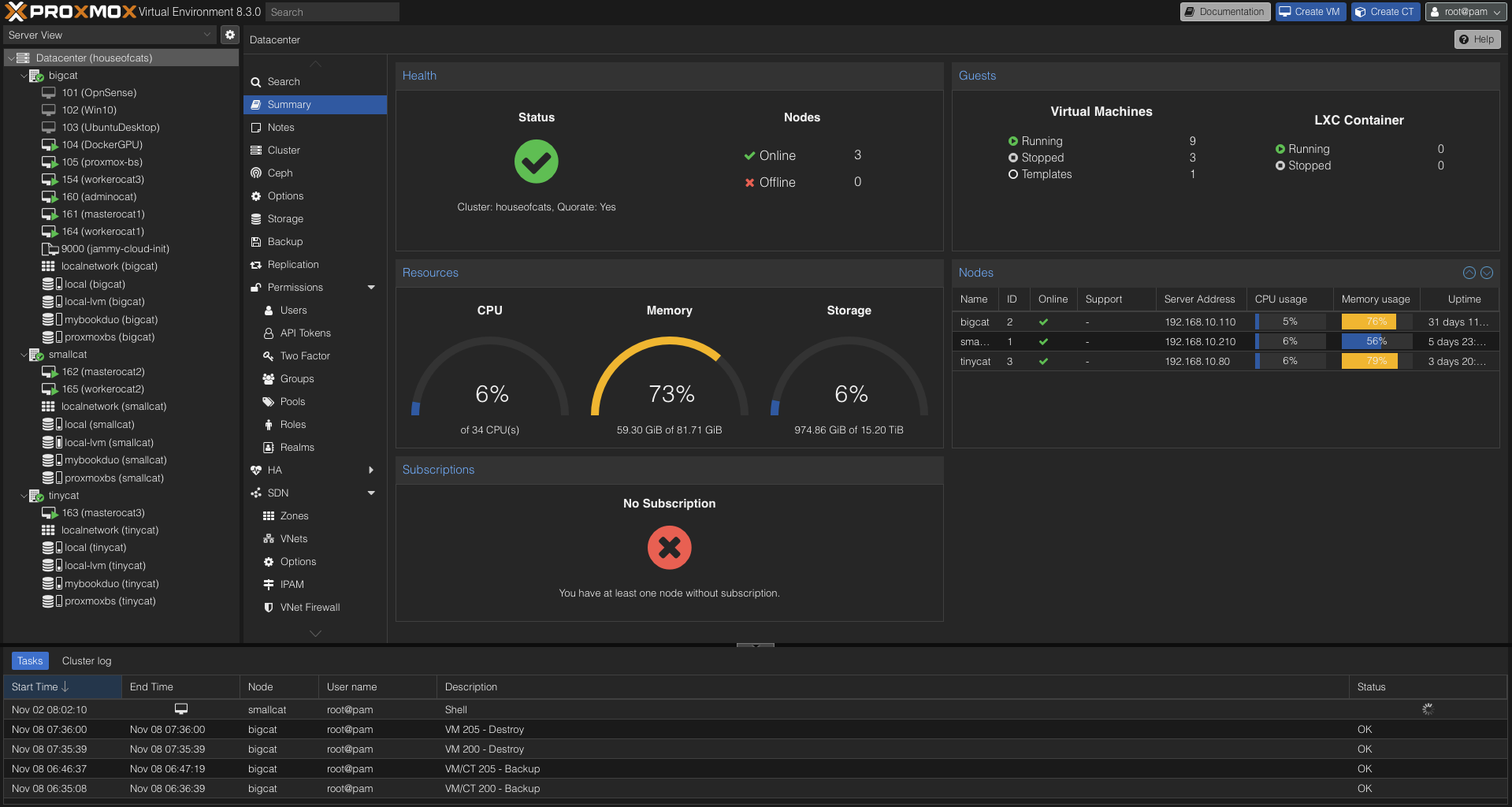

3. Proxmox Virtualization Layer

The homelab is orchestrated through Proxmox VE, which provides virtualization, backup management, and VM resource isolation across all nodes.

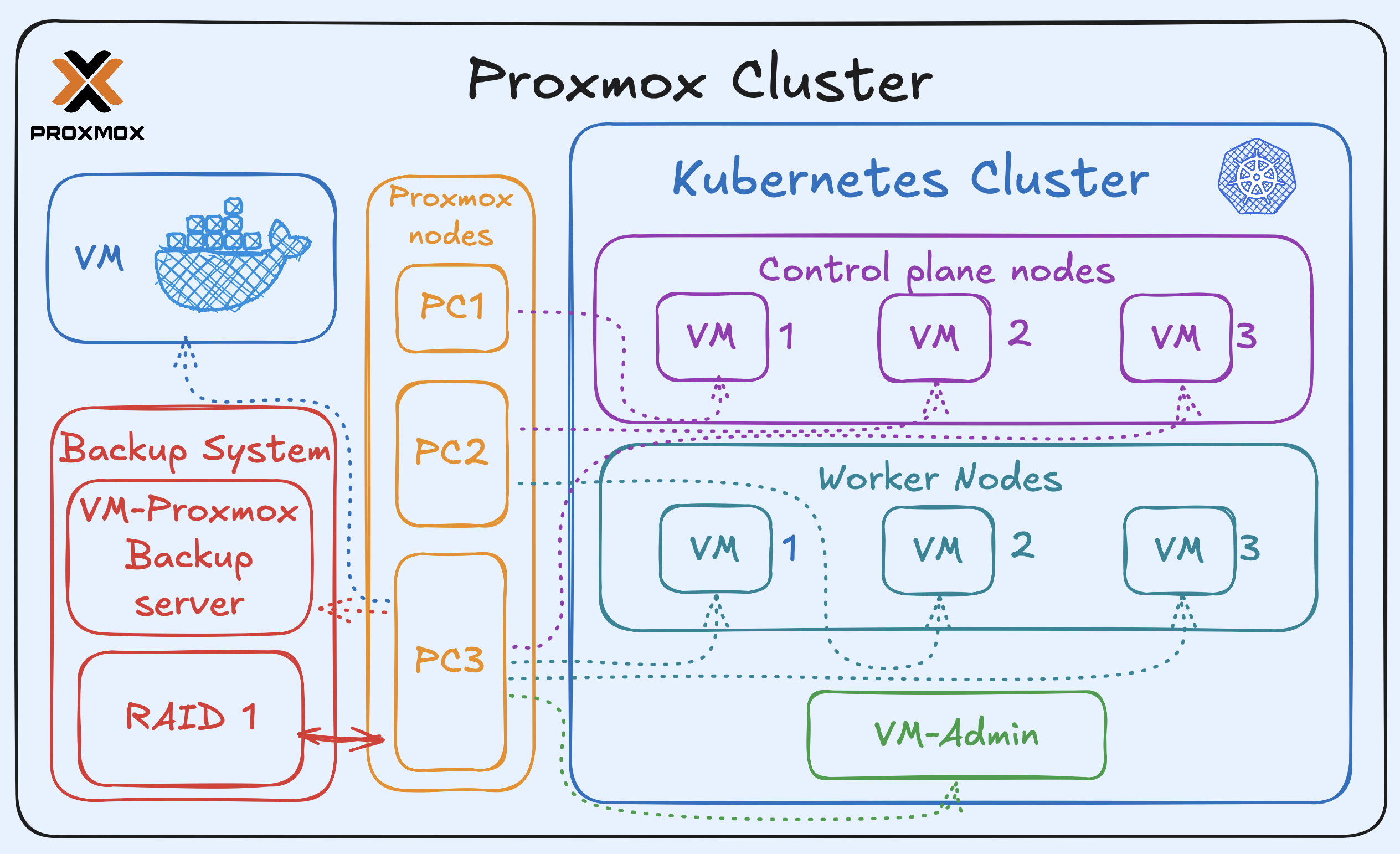

Kubernetes Cluster

Each Proxmox node hosts one or more virtual machines (VMs) that form the Kubernetes cluster.

- Every Proxmox node runs one control-plane VM, so the control plane stays available if any single host in the three-node setup goes down.

- Two worker nodes run on the higher-resource Proxmox host, while the third worker runs on the lower-resource one.

- The most powerful node also runs an additional admin VM, used to manage and monitor the Kubernetes cluster.
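
The availability claim above comes down to quorum arithmetic. The sketch below assumes the control plane uses an etcd-style datastore that needs a majority of members (the text does not say which datastore the K3s cluster uses), and shows how many control-plane VMs can be lost before that majority disappears.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with `members` voting members."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many members can be lost while a majority still remains."""
    return members - quorum(members)


# Three control-plane VMs, one per Proxmox node (from the layout above).
control_plane_vms = 3
print(quorum(control_plane_vms))              # 2
print(tolerated_failures(control_plane_vms))  # 1
```

Under that assumption, a fourth control-plane VM would not raise the number of tolerated failures, which is one reason odd member counts are the usual choice.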

Backup System

The RAID 1 array is physically connected to the most powerful Proxmox host, which runs a dedicated Proxmox Backup Server VM.

All VMs from the Proxmox cluster are periodically backed up here to ensure consistent and reliable recovery.

Docker VM

In addition, the same host runs a Docker VM for containerized applications such as Pi-hole, Homarr, MinIO, and more; this setup is detailed in the next section.

The following diagram summarizes the relationship between the Proxmox layer, Kubernetes cluster, and backup system.

4. Docker Environment

The homelab journey began with Docker Compose, serving as the initial foundation for experimentation and container management before transitioning to Kubernetes.

All applications run inside a dedicated Docker VM hosted on Proxmox, as described earlier, providing an isolated environment for lightweight services and monitoring tools.

The current Docker stack includes several core services that remain actively used today:

- Pi-hole – DNS-level ad blocking and internal network DNS resolution

- Traefik – Reverse proxy and automatic SSL termination for Docker services

- MinIO – S3-compatible object storage for encrypted local backups and test workloads

- Homarr – Central dashboard providing unified access to all self-hosted services

- WG-Easy – Web-based interface for managing WireGuard VPN peers
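
To make "DNS-level ad blocking" concrete, here is a simplified sketch of the sinkhole idea behind tools like Pi-hole. It is not Pi-hole's implementation: the blocklist entries, the local record, and the `resolve` function are hypothetical, and answering with `0.0.0.0` is only one common blocking mode.

```python
BLOCKLIST = {"ads.example.com", "tracker.example.net"}  # hypothetical entries
LOCAL_RECORDS = {"nas.home.arpa": "192.168.1.20"}       # hypothetical local DNS record


def resolve(name: str) -> str:
    """Answer a query the way a DNS sinkhole would, in simplified form."""
    name = name.rstrip(".").lower()
    if name in BLOCKLIST:
        return "0.0.0.0"      # unspecified address: the client connects nowhere
    if name in LOCAL_RECORDS:
        return LOCAL_RECORDS[name]
    return "FORWARD"          # a real resolver would query its upstream here


print(resolve("ads.example.com"))
print(resolve("nas.home.arpa"))
```

Because the decision is made at lookup time, every device that uses the resolver is covered without installing anything on the device itself.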

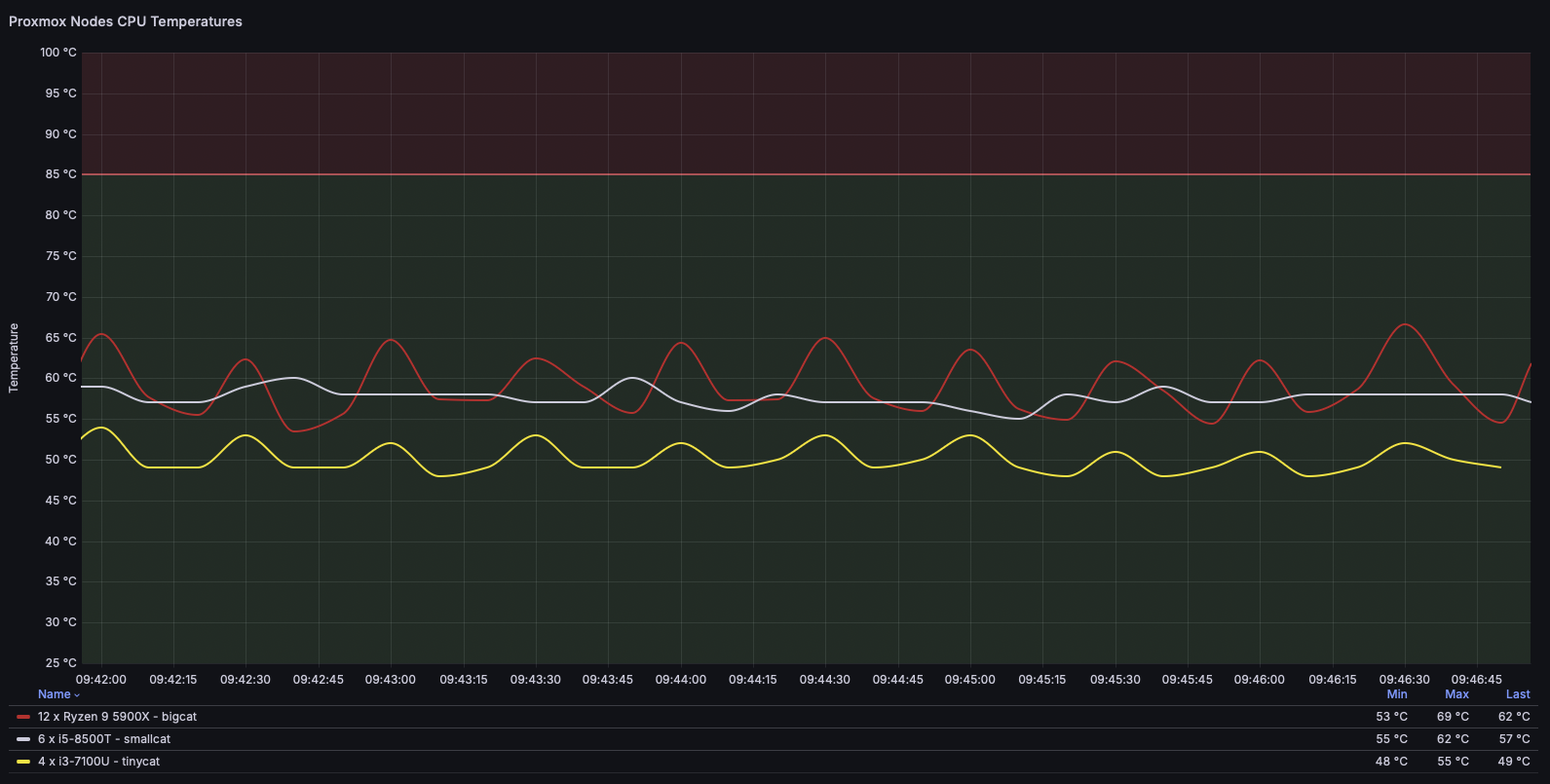

For observability, CPU temperature metrics from all Proxmox nodes are stored in InfluxDB and charted with Grafana.

The data is visualized in the Homarr dashboard, providing a quick overview of system thermal performance.
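
As an illustration of what one temperature sample might look like on its way into InfluxDB, the sketch below reads a Linux thermal zone and formats the value as InfluxDB line protocol. The collection method actually used here is not described, so the sysfs path, the `cpu_temp` measurement name, and the `pve1` host tag are all assumptions.

```python
from pathlib import Path


def read_cpu_temp_celsius(zone: str = "/sys/class/thermal/thermal_zone0/temp") -> float:
    """Read a Linux thermal zone; the kernel reports millidegrees Celsius."""
    return int(Path(zone).read_text().strip()) / 1000.0


def to_line_protocol(host: str, celsius: float, ts_ns: int) -> str:
    """Format one point as line protocol: measurement,tags fields timestamp."""
    return f"cpu_temp,host={host} celsius={celsius} {ts_ns}"


# Fixed values keep the example reproducible on machines without that sysfs path.
print(to_line_protocol("pve1", 54.0, 1_700_000_000_000_000_000))
# cpu_temp,host=pve1 celsius=54.0 1700000000000000000
```

On a real node, `read_cpu_temp_celsius()` and `time.time_ns()` would supply the value and the timestamp, and the resulting line would be sent to the InfluxDB write endpoint.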

The Docker VM represents the transition from my early containerization experiments to the current GitOps-driven Kubernetes cluster, and continues to host essential lightweight services.

5. Kubernetes Environment

The Kubernetes environment represents the current phase of my homelab’s evolution: a highly available K3s cluster orchestrated entirely through GitOps.

It spans three control-plane nodes and three worker nodes, all provisioned as virtual machines on the Proxmox cluster, as mentioned earlier.

Cluster Bootstrapping

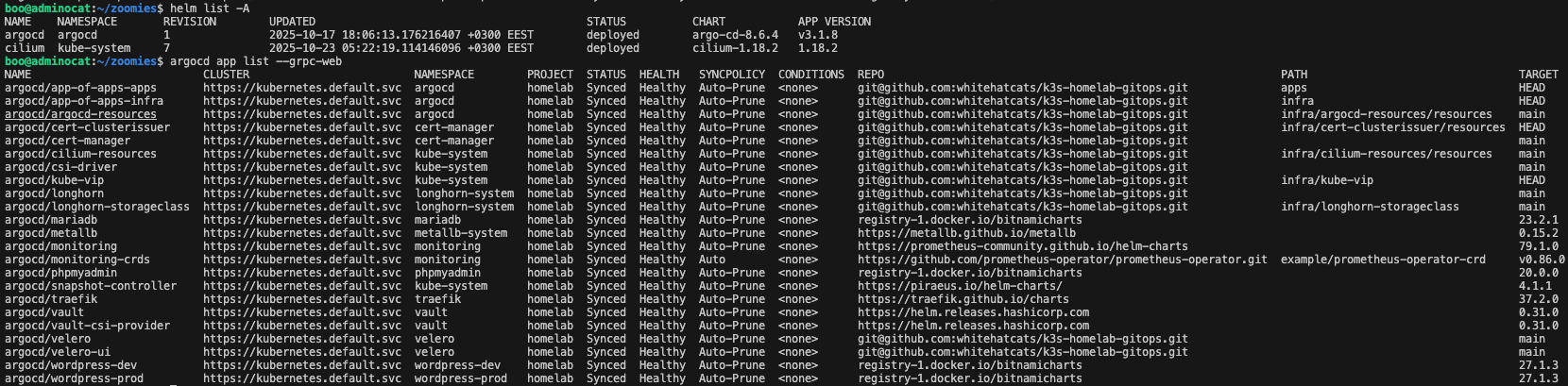

The core GitOps and networking components — Argo CD and Cilium — are installed manually via Helm during the initial cluster bootstrap to ensure full control over versioning and avoid circular dependencies.

Once deployed, Argo CD takes ownership of all subsequent cluster resources and applications through declarative GitOps synchronization.

Although both components are bootstrapped with Helm, their ongoing configuration and resource management are handled declaratively via Argo CD Applications:

- argocd-resources – manages Argo CD’s own configuration, Projects, RBAC, and Application manifests

- cilium-resources – manages Cilium-specific network policies, CRDs, and configuration objects

This structure allows the cluster to remain fully GitOps-managed, while maintaining a clear separation between Helm-controlled installations and Argo CD–managed resources.
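
The two Applications named above could be described roughly as follows. This is a sketch that builds the manifests as Python dictionaries, not a copy of the repository's contents: the repository host, the `bootstrap/...` paths, the `default` project, and the target namespaces are placeholders.

```python
import json

# Placeholder host; only the repository name comes from the text.
REPO_URL = "https://example.com/whitehatcats/k3s-homelab-gitops.git"


def application(name: str, path: str, namespace: str) -> dict:
    """Build an Argo CD Application that syncs one directory of the repo."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": name, "namespace": "argocd"},
        "spec": {
            "project": "default",  # assumed project name
            "source": {"repoURL": REPO_URL, "targetRevision": "HEAD", "path": path},
            "destination": {
                "server": "https://kubernetes.default.svc",  # the local cluster
                "namespace": namespace,
            },
        },
    }


apps = [
    application("argocd-resources", "bootstrap/argocd-resources", "argocd"),
    application("cilium-resources", "bootstrap/cilium-resources", "kube-system"),
]
print(json.dumps(apps[0], indent=2))
```

The Helm releases themselves stay outside these Applications, which is what keeps the bootstrap step free of circular dependencies: Argo CD never has to install the components it depends on to run.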

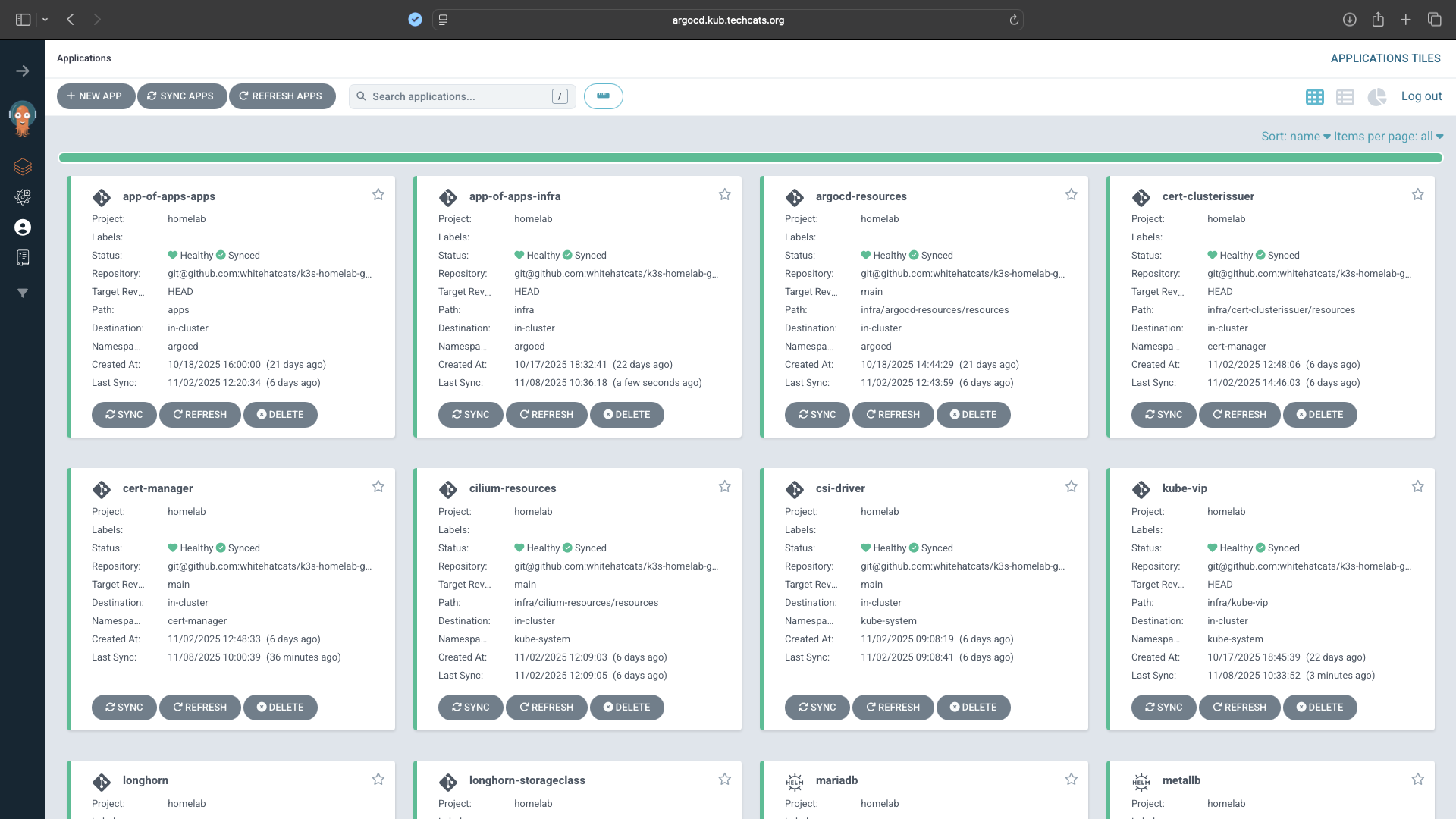

GitOps Management

After bootstrapping, all remaining components are managed declaratively by Argo CD using the App-of-Apps pattern.

Each layer of the infrastructure—networking, storage, secrets, ingress, and monitoring—is represented as a separate Argo CD Application sourced directly from Git.

The GitOps workflow is defined in the public repository:

whitehatcats/k3s-homelab-gitops

Each Application follows a dedicated namespace and project structure, ensuring isolation and simplified lifecycle management.

All are configured with Auto-Sync and Auto-Prune, so changes committed to Git are applied automatically and resources removed from Git are deleted from the cluster.
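
Conceptually, a sync with pruning is a comparison between what Git declares and what the cluster currently runs. The function below is a toy model of that comparison, not Argo CD's actual algorithm; `plan_sync` and the sample manifests are hypothetical.

```python
def plan_sync(desired: dict, live: dict, prune: bool = True) -> dict:
    """Compare manifests in Git (desired) with the cluster (live), keyed by name."""
    create = sorted(desired.keys() - live.keys())
    update = sorted(k for k in desired.keys() & live.keys() if desired[k] != live[k])
    delete = sorted(live.keys() - desired.keys()) if prune else []
    return {"create": create, "update": update, "delete": delete}


git = {"traefik": {"replicas": 2}, "longhorn": {"replicas": 3}}
cluster = {"traefik": {"replicas": 1}, "old-app": {"replicas": 1}}
print(plan_sync(git, cluster))
# {'create': ['longhorn'], 'update': ['traefik'], 'delete': ['old-app']}
```

With pruning disabled, `old-app` would be left running even though it no longer exists in Git. In Argo CD, reverting manual changes made directly in the cluster additionally depends on the `selfHeal` option of the automated sync policy.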

Infrastructure Components

- Cert-manager – Automates certificate issuance using Let’s Encrypt DNS-01 (Cloudflare)

- Vault + CSI Driver + Provider – Handles secrets injection directly into pods using Vault roles

- Longhorn – Provides distributed block storage with built-in replication and snapshots

- Traefik – Cluster ingress controller with Cloudflare DNS integration and automatic TLS

- MetalLB – Allocates LoadBalancer IPs from the local subnet

- Kube-VIP – Provides a virtual IP address for the control-plane API server, ensuring high availability across control-plane nodes

- Prometheus Operator Stack – Collects and manages metrics from both cluster components and applications for observability in Grafana

- Velero – Performs backups to the local MinIO S3 target
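
To illustrate what "allocates LoadBalancer IPs from the local subnet" means in practice, the sketch below expands an address range and hands out the first free address. It models the idea only, not MetalLB's code, and the `192.168.1.240` to `192.168.1.243` range is a hypothetical pool.

```python
import ipaddress


def pool_addresses(first: str, last: str) -> list:
    """Expand an inclusive first-last range into individual IPv4 addresses."""
    start = int(ipaddress.IPv4Address(first))
    end = int(ipaddress.IPv4Address(last))
    return [str(ipaddress.IPv4Address(i)) for i in range(start, end + 1)]


def allocate(pool: list, in_use: set) -> str:
    """Return the first free address, as a simple first-fit allocator would."""
    for addr in pool:
        if addr not in in_use:
            return addr
    raise RuntimeError("address pool exhausted")


pool = pool_addresses("192.168.1.240", "192.168.1.243")  # hypothetical range
print(allocate(pool, in_use={"192.168.1.240"}))
# 192.168.1.241
```

A pool like this is normally kept outside the router's DHCP range so that a LoadBalancer Service never collides with a leased address.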

Secrets and Certificates

Secrets are provisioned by HashiCorp Vault, integrated with the cluster via the Secrets Store CSI Driver.

This ensures that sensitive data (API tokens, S3 credentials, and TLS keys) never appears in plaintext within the Git repository.

Certificates are managed by cert-manager, which handles both public (Let’s Encrypt) and internal (Vault) issuers.
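
The Vault integration is typically expressed as a `SecretProviderClass` that tells the CSI driver which Vault role to use and which secrets to mount. The sketch below builds one as a Python dictionary; the Vault address, role name, secret path, and object names are hypothetical and not taken from the repository.

```python
import json

# The Vault provider reads `objects` as a YAML document embedded in a string.
objects = (
    '- objectName: "db-password"\n'
    '  secretPath: "secret/data/wordpress/mariadb"\n'
    '  secretKey: "password"\n'
)

secret_provider_class = {
    "apiVersion": "secrets-store.csi.x-k8s.io/v1",
    "kind": "SecretProviderClass",
    "metadata": {"name": "mariadb-credentials", "namespace": "wordpress-prod"},
    "spec": {
        "provider": "vault",
        "parameters": {
            "vaultAddress": "http://vault.vault.svc:8200",  # assumed in-cluster address
            "roleName": "wordpress",                        # assumed Vault role
            "objects": objects,
        },
    },
}

print(json.dumps(secret_provider_class, indent=2))
```

Only this reference lives in Git. The secret value stays in Vault and is mounted into the pod as a file when the volume is attached, which is how the repository stays free of plaintext credentials.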

Observability and Backups

The Prometheus Operator Stack monitors cluster health, workload performance, and other telemetry.

Metrics are visualized in Grafana, and backups are handled by Velero, which writes to the local MinIO S3 target.

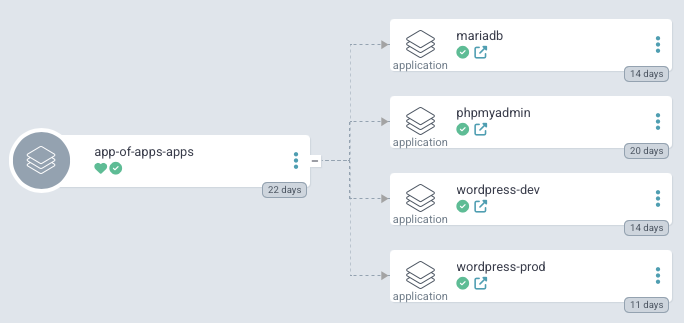

Application Example: WordPress Stack

To demonstrate a complete GitOps-managed deployment pipeline, the cluster hosts a multi-tier WordPress stack consisting of WordPress, MariaDB, and phpMyAdmin, all orchestrated through the App-of-Apps pattern.

Each component is deployed declaratively from the Git repository through individual Argo CD Applications:

- WordPress (prod/dev) – front-end web application, each environment running in its own namespace with dedicated ingress, TLS certificate, and persistent storage provided by Longhorn

- MariaDB – database backend configured using Vault-managed credentials projected via the CSI driver

- phpMyAdmin – optional administrative interface for managing the WordPress database

All resources are reconciled automatically by Argo CD, so configuration drift is corrected without manual intervention.

This structure demonstrates how stateful and stateless components can coexist within the same GitOps workflow, using environment segregation and infrastructure reusability.

The WordPress stack serves as a practical example of continuous delivery in a GitOps-driven Kubernetes cluster, showcasing how declarative infrastructure and applications align within a unified workflow.

The Kubernetes environment forms the backbone of the homelab — a self-healing, GitOps-driven platform built for experimentation, observability, and secure automation.

The entire infrastructure supports my hands-on learning and technical writing.

Selected components and workflows are documented in dedicated Posts that demonstrate applied DevOps practices and real-world problem solving.