Overview#

Cilium replaces the default K3s CNI to provide advanced network visibility and enforcement across the cluster.

It integrates Hubble for real-time Layer 3–7 flow observability and enforces identity-aware traffic rules using CiliumNetworkPolicy.

While Cilium is installed manually through Helm, all its resources — including Hubble UI, TLS certificates, and network policies — are managed declaratively via Argo CD, keeping the setup fully GitOps-driven and reproducible.

This post demonstrates how network segmentation is progressively built within the wordpress-prod namespace, starting from a default deny rule and incrementally allowing DNS, database, and frontend connectivity, all validated visually through the Hubble dashboard.

Cilium Setup#

Cilium acts as both a CNI (Container Network Interface) and a security enforcement layer, replacing traditional components like kube-proxy and flannel.

It provides identity-based network policies, deep observability with Hubble, and seamless integration with Kubernetes Services without relying on iptables.

In this setup, Cilium is deployed on the K3s cluster running inside the homelab environment, forming the foundation for all subsequent network policy enforcement and traffic monitoring.

Helm Installation#

Note:

If the K3s cluster was not initially created with the flags `--flannel-backend=none` and `--disable-network-policy`, the nodes must be adjusted manually before deploying Cilium.
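
For a fresh cluster, the same flags can be set in the K3s server configuration file instead of on the command line. The fragment below is a minimal sketch, assuming the default config path `/etc/rancher/k3s/config.yaml`. The `disable-kube-proxy` entry is an assumption that goes beyond the flags named in the note: it only applies when Cilium takes over kube-proxy's role, as in the Helm installation in this post.

```yaml
# /etc/rancher/k3s/config.yaml on the server node(s)
# Keys mirror the K3s CLI flags without the leading dashes.
flannel-backend: "none"        # do not start flannel; Cilium provides the CNI
disable-network-policy: true   # turn off the embedded K3s network policy controller

# Assumption, not part of the note above: relevant only when
# Cilium runs with kubeProxyReplacement=true.
disable-kube-proxy: true
```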

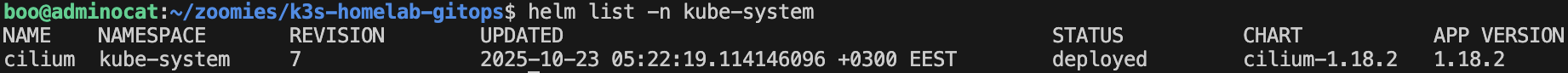

Cilium was installed in the kube-system namespace using Helm, replacing kube-proxy and enabling advanced network visibility with Hubble.

This setup provides full Layer 3–7 observability and network policy enforcement across all namespaces in the cluster.

The installation was performed with the following Helm command:

    helm upgrade --install cilium cilium/cilium \
      --namespace kube-system \
      \
      --set kubeProxyReplacement=true \
      --set k8sServiceHost=192.168.10.250 \
      --set k8sServicePort=6443 \
      \
      --set enableNodePort=true \
      --set enableExternalIPs=true \
      --set enableHostPort=true \
      \
      --set routingMode=tunnel \
      --set ipam.mode=kubernetes \
      --set config.enabled=true \
      \
      --set hubble.relay.enabled=true \
      --set hubble.ui.enabled=true \
      --set hubble.metrics.enabled="{dns,drop,tcp,flow,icmp,http}" \
      --set hubble.ui.service.type=ClusterIP

The resulting configuration can be verified using:

    helm list -n kube-system
    helm get values cilium -n kube-system

    config:
      enabled: true
    enableExternalIPs: true
    enableHostPort: true
    enableNodePort: true
    hubble:
      metrics:
        enabled:
        - dns
        - drop
        - tcp
        - flow
        - icmp
        - http
      relay:
        enabled: true
      ui:
        enabled: true
        service:
          type: ClusterIP
    ipam:
      mode: kubernetes
    k8sServiceHost: 192.168.10.250
    k8sServicePort: 6443
    kubeProxyReplacement: true
    routingMode: tunnel

This configuration enables:

- kubeProxyReplacement: delegates service handling and load balancing to Cilium.

- Hubble Relay and UI: exposes real-time flow metrics and visualization dashboards.

- Routing mode (tunnel): encapsulates pod-to-pod traffic between nodes.

- Kubernetes IPAM mode: allocates pod IPs from the PodCIDR that Kubernetes assigns to each node.

- Hubble metrics: collects DNS, drop, TCP, flow, ICMP, and HTTP statistics for observability.

Once deployed, the Hubble UI was exposed through Traefik at: https://hubble.kub.techcats.org

GitOps Integration with Argo CD#

Although Cilium itself was installed using Helm, all related manifests — such as CiliumResources, Hubble configuration, and NetworkPolicies — are deployed declaratively through Argo CD.

This ensures full GitOps alignment: any change made in the repository is automatically reconciled in the cluster, maintaining configuration consistency and auditability across environments.
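
To make the reconciliation concrete, here is a rough sketch of what an Argo CD Application for the policy manifests could look like. It is not the author's actual `app.yaml`: the Application name, the `argocd` namespace, the repository URL, and the branch are all placeholders, and the automated sync options are an assumption based on the "automatically reconciled" behavior described above. Only the `path` is taken from the repository layout used in this post.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: cilium-network-policies        # hypothetical name
  namespace: argocd                    # assumes Argo CD runs in its usual namespace
spec:
  project: default
  source:
    repoURL: https://git.example.org/homelab/gitops.git   # placeholder, not the real repository
    targetRevision: main                                   # assumed branch
    path: infra/cilium-resources/network-policies
  destination:
    server: https://kubernetes.default.svc
    # No destination namespace here: each policy manifest declares its own.
  syncPolicy:
    automated:
      prune: true      # remove resources that were deleted from Git
      selfHeal: true   # revert manual changes made in the cluster
```

With `selfHeal` enabled, a policy edited by hand in the cluster would be reverted to the version in Git on the next reconciliation.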

The relevant folder structure is shown below:

    infra/
    └── cilium-resources/
        ├── network-policies/
        │   ├── wordpress-prod/
        │   │   ├── cnp-allow-dns.yaml
        │   │   ├── cnp-allow-mariadb.yaml
        │   │   ├── cnp-allow-traefik.yaml
        │   │   ├── np-default-deny-all.yaml
        │   │   └── kustomization.yaml
        │   └── kustomization.yaml
        ├── resources/
        │   ├── cert-hubble.yaml
        │   ├── ir-hubble.yaml
        │   └── kustomization.yaml
        └── app.yaml

In this layout:

- The `cilium-resources/resources` directory contains manifests for Hubble UI, certificates, and IngressRoutes.

- The `network-policies` directory holds all CiliumNetworkPolicy definitions for application namespaces.

- Both directories are tracked by Argo CD Applications, ensuring automatic synchronization between Git and the cluster.
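
The `kustomization.yaml` files are what tie the individual policy files together. Their contents are not shown in this post, so the following is only a plausible sketch built from the file names in the tree above. The ordering of the resources is arbitrary.

```yaml
# infra/cilium-resources/network-policies/kustomization.yaml (assumed content)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - wordpress-prod            # pulls in the per-namespace kustomization below
---
# infra/cilium-resources/network-policies/wordpress-prod/kustomization.yaml (assumed content)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - np-default-deny-all.yaml
  - cnp-allow-dns.yaml
  - cnp-allow-mariadb.yaml
  - cnp-allow-traefik.yaml
```

Adding policies for another namespace would then mean adding a new directory and one extra entry in the parent file.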

Hubble UI Exposure#

Both the TLS certificate and IngressRoute for the Hubble dashboard are declaratively managed through Argo CD under `infra/cilium-resources/resources/`.

The dashboard is securely exposed at https://hubble.kub.techcats.org, with certificates automatically issued by cert-manager using the Vault-integrated ClusterIssuer `letsencrypt-vault-issuer`.

All *.kub.techcats.org domains are resolved locally within the homelab network using Pi-hole, ensuring that Hubble remains accessible only from the internal LAN and is not publicly exposed on the internet.
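
The two manifests behind this exposure are not reproduced in the post. As a hedged illustration, they might look roughly like the following. The hostname and ClusterIssuer name come from the text above. Everything else is an assumption: the resource and Secret names, the `websecure` entry point, and the `hubble-ui` Service on port 80 (what the Cilium Helm chart typically creates).

```yaml
# cert-hubble.yaml (sketch)
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: hubble-tls                  # hypothetical name
  namespace: kube-system
spec:
  secretName: hubble-tls            # hypothetical Secret that will hold the key pair
  dnsNames:
    - hubble.kub.techcats.org
  issuerRef:
    kind: ClusterIssuer
    name: letsencrypt-vault-issuer
---
# ir-hubble.yaml (sketch)
apiVersion: traefik.io/v1alpha1     # older Traefik releases use traefik.containo.us/v1alpha1
kind: IngressRoute
metadata:
  name: hubble-ui                   # hypothetical name
  namespace: kube-system            # same namespace as the Service and the TLS Secret
spec:
  entryPoints:
    - websecure                     # assumed name of the HTTPS entry point
  routes:
    - match: Host(`hubble.kub.techcats.org`)
      kind: Rule
      services:
        - name: hubble-ui           # assumed Service name from the Cilium chart
          port: 80
  tls:
    secretName: hubble-tls
```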

Network Policies Example#

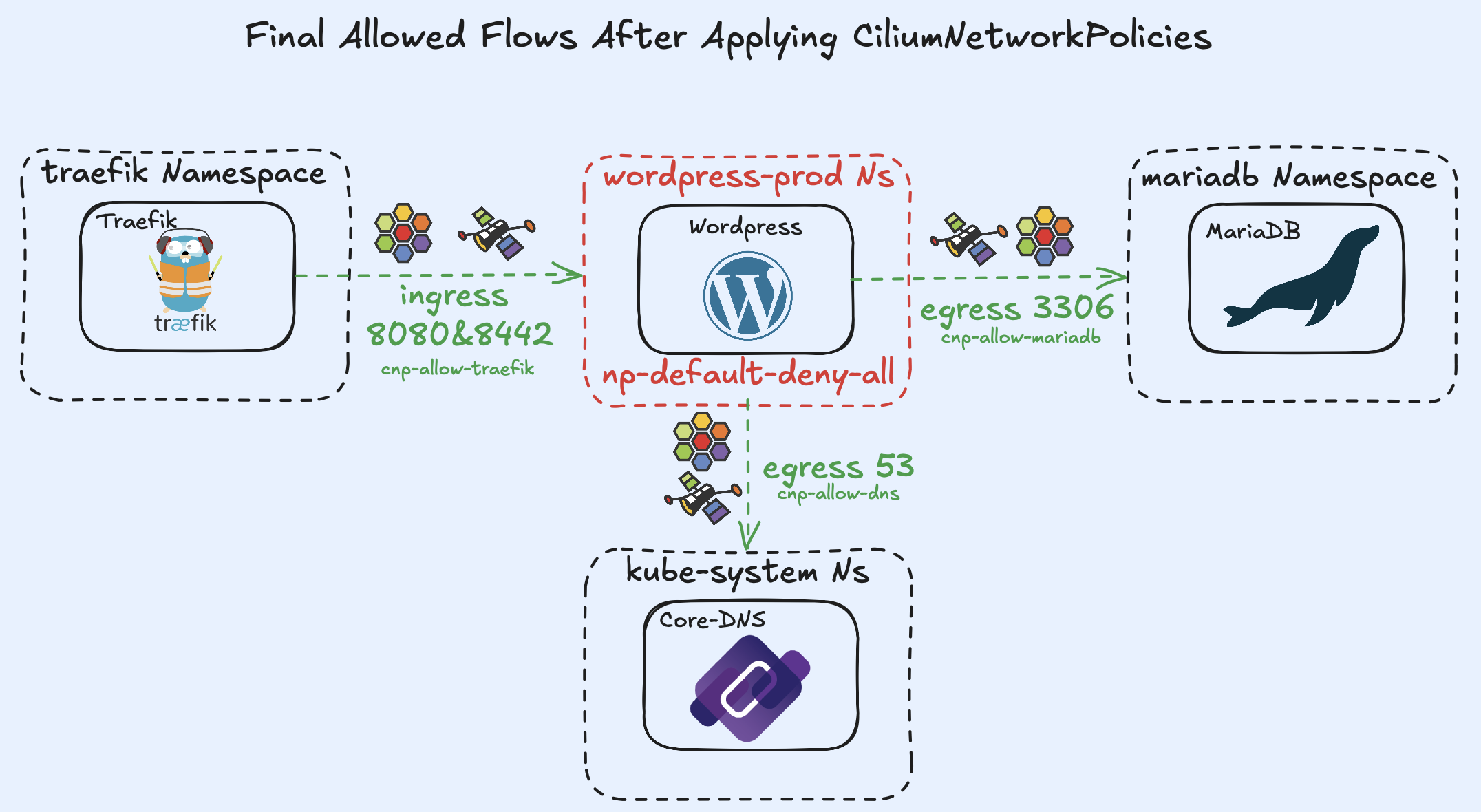

With no network policies applied, traffic flows freely within the wordpress-prod namespace.

It is considered best practice to begin by denying all ingress and egress traffic by default.

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: default-deny-all
      namespace: wordpress-prod
    spec:
      podSelector: {}
      policyTypes:
        - Ingress
        - Egress

After applying the default-deny-all policy, Hubble will report:

“No flows found for wordpress-prod namespace.”

This occurs because the pods can no longer reach the DNS server for name resolution.

To restore DNS functionality, a CiliumNetworkPolicy must be added to explicitly allow DNS egress.

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: allow-dns
      namespace: wordpress-prod
    spec:
      endpointSelector: {}
      egress:
        - toEndpoints:
            - matchLabels:
                k8s:io.kubernetes.pod.namespace: kube-system
                k8s:k8s-app: kube-dns
          toPorts:
            - ports:
                - port: "53"
                  protocol: UDP
                - port: "53"
                  protocol: TCP
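
The allow-dns policy permits DNS at Layer 3/4 only. As an optional variant, not part of the original setup, the same rule can carry a Cilium DNS rule so that lookups pass through Cilium's DNS proxy and individual queries become visible in Hubble as Layer 7 flows. The sketch below assumes that every name may be resolved (`matchPattern: "*"`); a stricter pattern list is possible.

```yaml
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-dns
  namespace: wordpress-prod
spec:
  endpointSelector: {}
  egress:
    - toEndpoints:
        - matchLabels:
            k8s:io.kubernetes.pod.namespace: kube-system
            k8s:k8s-app: kube-dns
      toPorts:
        - ports:
            - port: "53"
              protocol: ANY         # covers both UDP and TCP
          rules:
            dns:
              - matchPattern: "*"   # allow all names, but inspect each query via the DNS proxy
```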

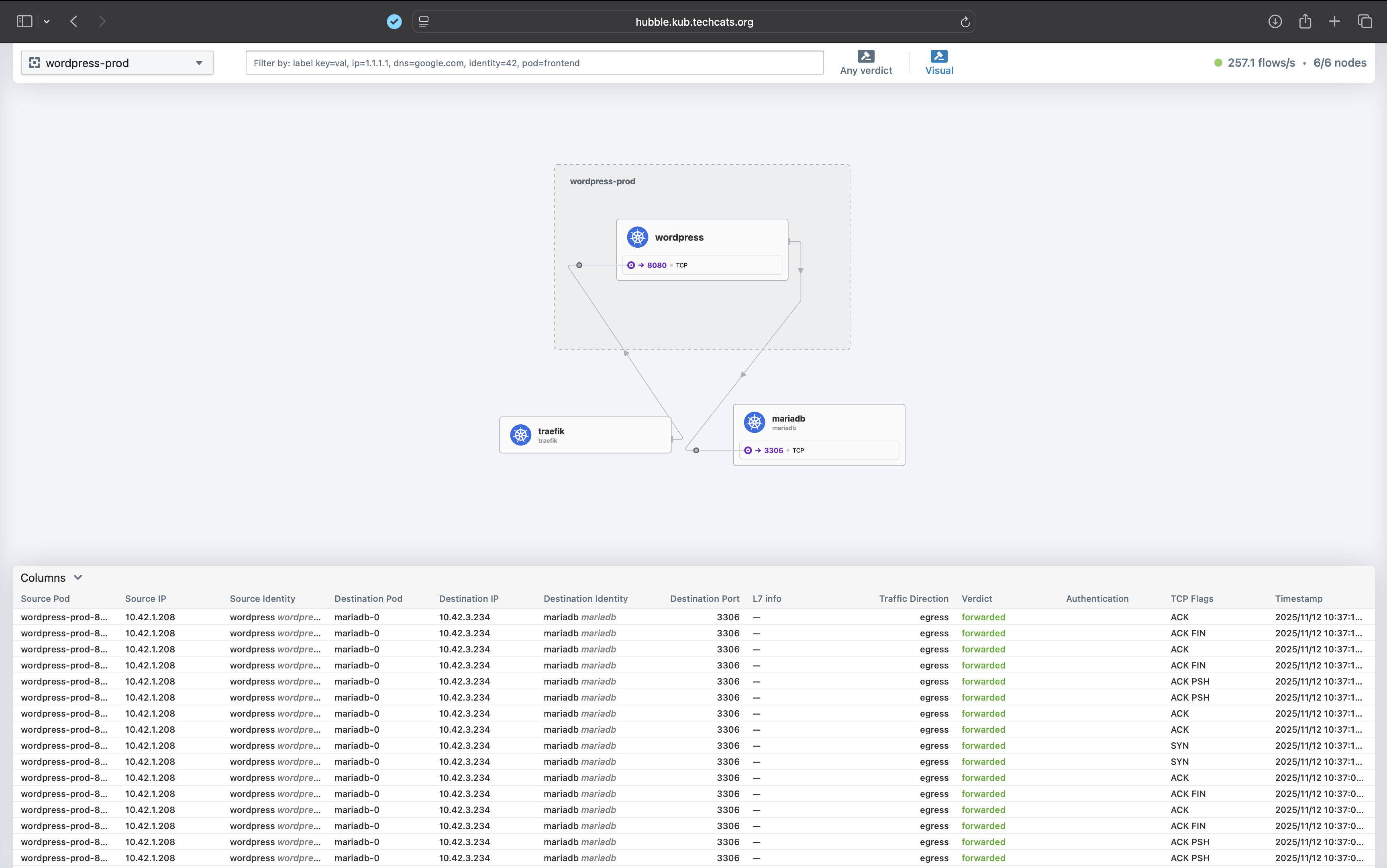

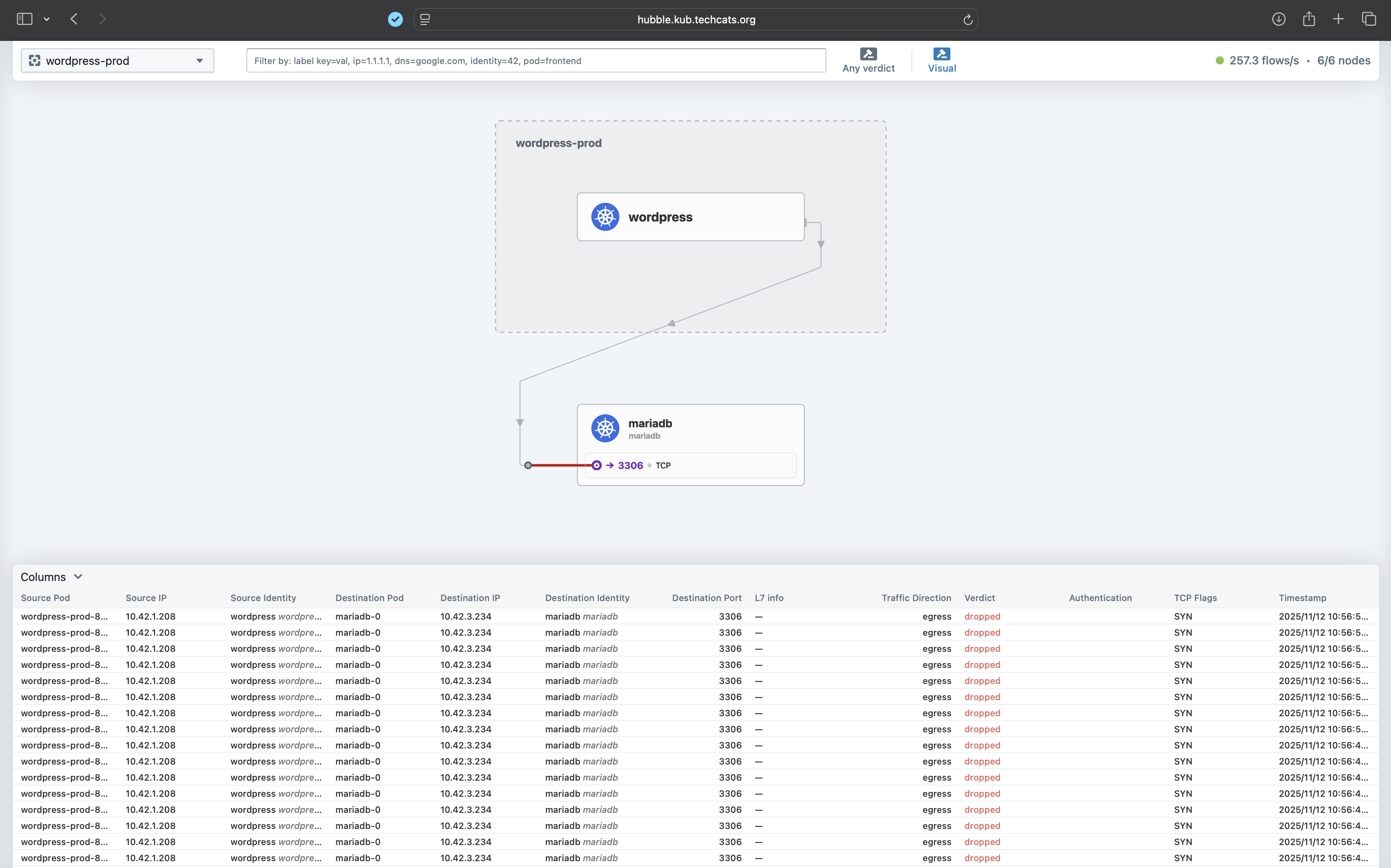

After deploying the allow-dns policy, WordPress can resolve the MariaDB service hostname, and Cilium begins detecting outbound traffic flows, visible again in the Hubble UI.

However, the packets are still dropped, since no rule currently allows connections to the MariaDB service.

To enable communication between WordPress and MariaDB, another CiliumNetworkPolicy must be defined:

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: allow-wordpress-to-mariadb
      namespace: wordpress-prod
    spec:
      endpointSelector:
        matchLabels:
          app.kubernetes.io/name: wordpress
          app.kubernetes.io/instance: wordpress-prod
      egress:
        - toEndpoints:
            - matchLabels:
                app.kubernetes.io/name: mariadb
                app.kubernetes.io/instance: mariadb
                io.kubernetes.pod.namespace: mariadb
          toPorts:
            - ports:
                - port: "3306"
                  protocol: TCP

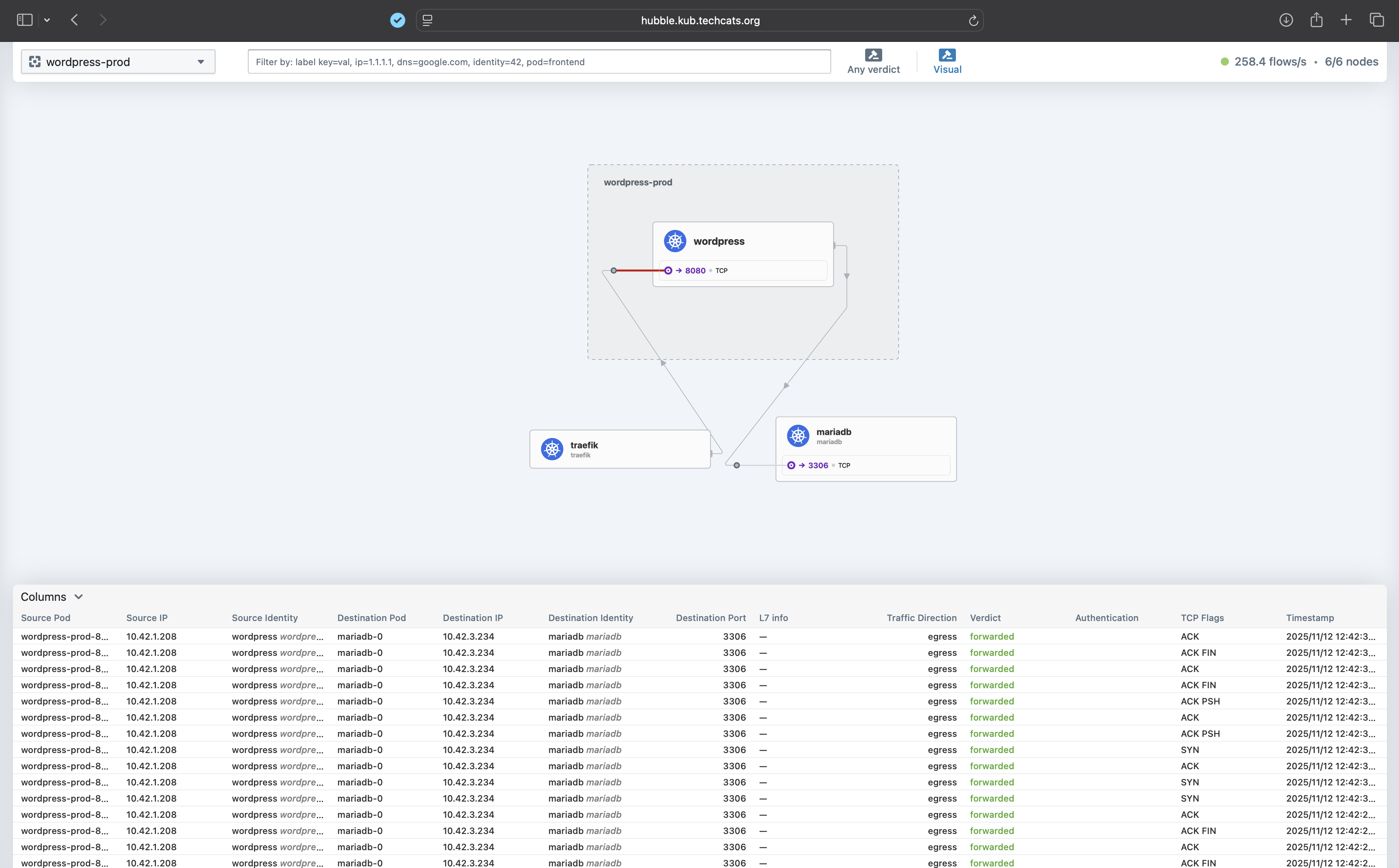

Once applied, the packets are successfully forwarded, as seen below:

At this stage, the WordPress frontend remains inaccessible because traffic from Traefik to WordPress is still blocked.

To restore frontend access, a final CiliumNetworkPolicy must be created to allow Traefik ingress to WordPress.

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: allow-traefik-to-wordpress
      namespace: wordpress-prod
    spec:
      endpointSelector:
        matchLabels:
          app.kubernetes.io/name: wordpress
          app.kubernetes.io/instance: wordpress-prod
      ingress:
        - fromEndpoints:
            - matchLabels:
                app.kubernetes.io/name: traefik
                app.kubernetes.io/instance: traefik-traefik
                io.kubernetes.pod.namespace: traefik
          toPorts:
            - ports:
                - port: "8080"
                  protocol: TCP
                - port: "8443"
                  protocol: TCP

Once applied, Hubble will display flow behavior similar to the first figure, showing successful communication across all components.

Key Takeaways#

- Cilium replaces kube-proxy, enabling identity-aware networking and load balancing without iptables.

- Hubble provides real-time observability of traffic flows, making it easy to validate and troubleshoot network policies.

- Start with a default-deny approach and allow only required communication (DNS, database, frontend) for a zero-trust model.

- All manifests are GitOps-managed through Argo CD, ensuring consistent, version-controlled deployment of Cilium resources and policies.

- Local-only exposure via Pi-hole DNS keeps internal dashboards like Hubble secure and isolated from the public internet.
