Overview#

This project provisions a complete Amazon EKS cluster using Terraform, including a VPC, subnets, NAT gateway, EKS control plane, and managed node group.

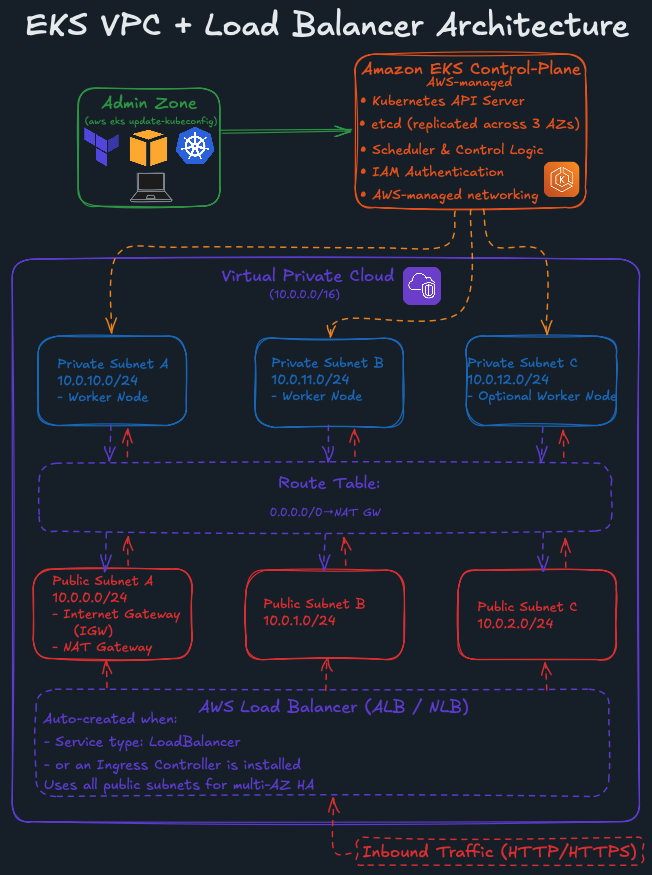

Before reviewing the Terraform configuration, the diagram below provides a high-level view of the Amazon EKS architecture, including the VPC, public/private subnets, NAT Gateway, worker nodes, and the flow through Kubernetes Service and Ingress resources.

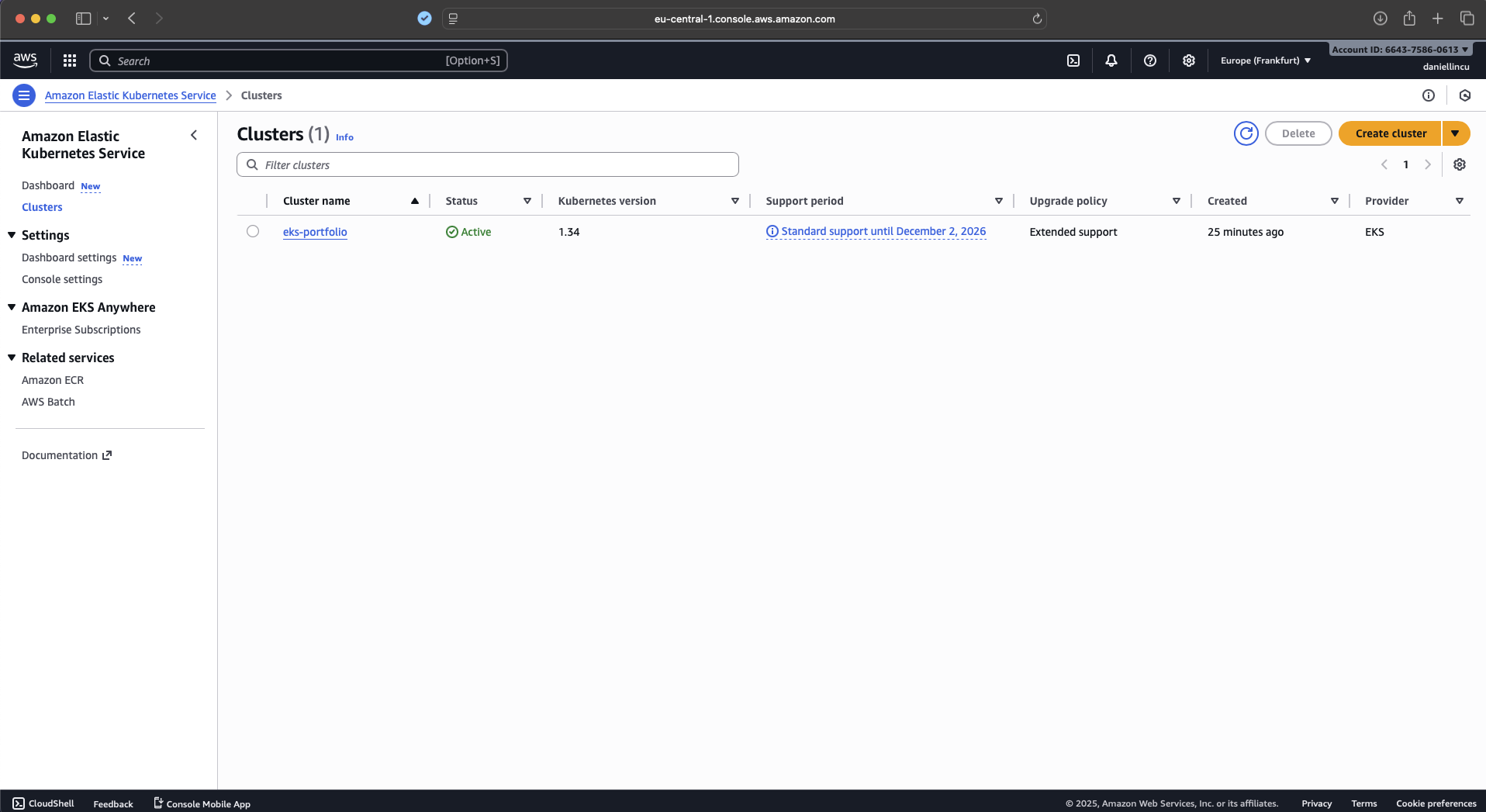

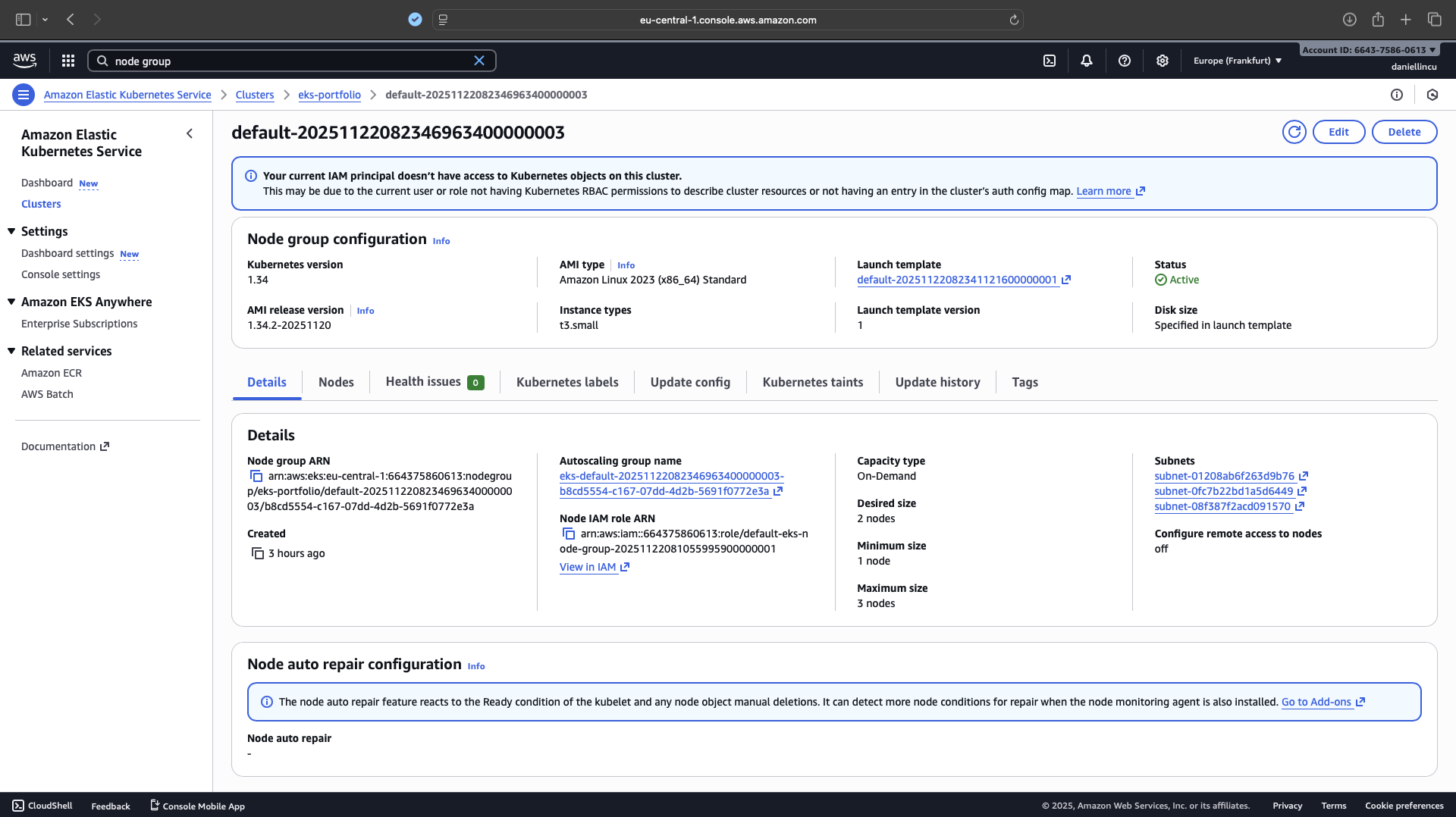

The following screenshot shows the Amazon EKS cluster created after applying the Terraform configuration.

Cluster eks-portfolio in Active state, running Kubernetes version 1.34. This confirms the successful creation of the EKS control plane using Terraform.

Prerequisites#

Before deploying the EKS cluster with Terraform, the following tools and environment were prepared:

- AWS account with an IAM user capable of creating EKS, EC2, VPC, IAM, and networking resources.

- AWS CLI v2 installed and configured using aws configure.

- Terraform 1.6+ installed locally.

- kubectl installed for interacting with the Kubernetes API server.

- A working directory containing all Terraform configuration files: main.tf, variables.tf, providers.tf, locals.tf, outputs.tf, versions.tf, and terraform.tfvars.

These prerequisites ensure that Terraform can authenticate to AWS, provision the required infrastructure, and configure local access to the EKS cluster using kubectl.
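As a rough preflight sketch, the check below reports which of the required CLIs are missing from PATH. The function name missing_tools is an assumption made for illustration and is not part of the original project; it relies only on the POSIX command -v builtin.

```shell
# Hypothetical helper (not part of the original project):
# print every required CLI that is not found on PATH.
# Prints nothing when all tools are available.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}
```

Running missing_tools aws terraform kubectl should print nothing on a prepared workstation. Afterwards, aws sts get-caller-identity can confirm that the configured credentials are valid.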

Folder Structure#

eks-terraform/
├── locals.tf
├── main.tf
├── outputs.tf
├── variables.tf
├── providers.tf
├── versions.tf
└── terraform.tfvars

Files#

Each Terraform file is modular and handles a specific part of the infrastructure.

locals.tf#

This file defines a reusable tags map applied across all AWS resources for consistent labeling.

locals {
  tags = {
    Project     = var.project
    Environment = "dev"
    ManagedBy   = "Terraform"
  }
}

main.tf#

This file builds the VPC and subnets using the official AWS VPC module, and then provisions the EKS cluster and node group using the AWS EKS module.

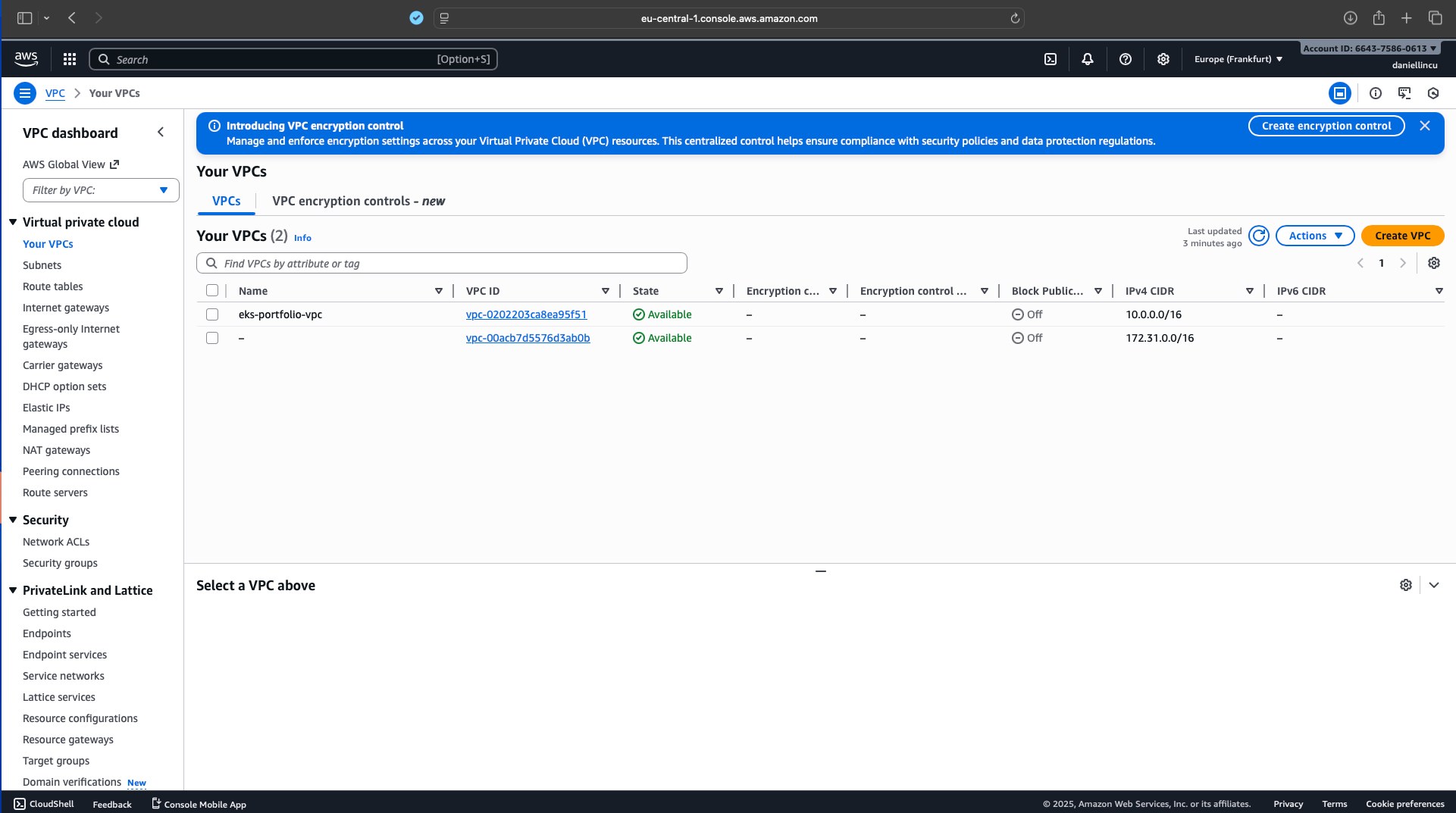

VPC (eks-portfolio-vpc) with CIDR block 10.0.0.0/16. This confirms that the VPC module successfully created the core networking layer required by the EKS cluster.

# ============================================================
# VPC
# ============================================================
module "vpc" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.0"

  name = "${var.project}-vpc"
  cidr = var.vpc_cidr

  azs             = var.azs
  public_subnets  = var.public_subnets
  private_subnets = var.private_subnets

  enable_nat_gateway   = var.enable_nat_gateway
  single_nat_gateway   = true
  enable_dns_hostnames = true
  enable_dns_support   = true

  tags = local.tags
}

# ============================================================
# EKS Cluster
# ============================================================
module "eks" {
  source  = "terraform-aws-modules/eks/aws"
  version = "~> 20.0"

  cluster_name    = var.cluster_name
  cluster_version = "1.34"

  cluster_endpoint_public_access = true

  vpc_id                   = module.vpc.vpc_id
  subnet_ids               = module.vpc.private_subnets
  control_plane_subnet_ids = module.vpc.private_subnets

  eks_managed_node_groups = {
    default = {
      instance_types = var.node_instance_types
      desired_size   = var.node_desired_capacity
      min_size       = var.node_min_size
      max_size       = var.node_max_size
    }
  }

  enable_cluster_creator_admin_permissions = true

  tags = local.tags
}

Managed node group using t3.small and autoscaling settings (desired 2, min 1, max 3).

outputs.tf#

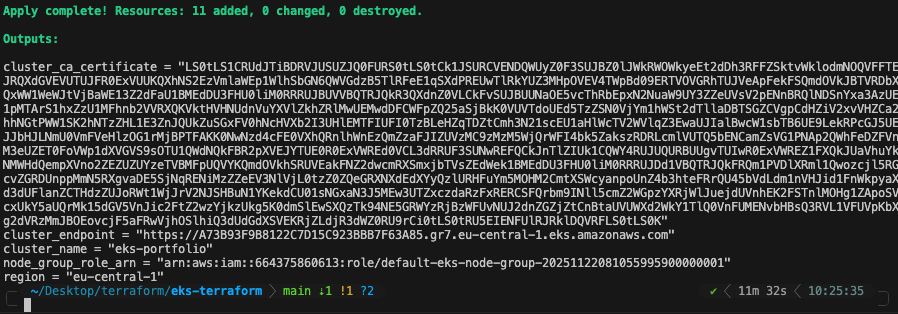

This file exposes essential cluster information (cluster name, endpoint, CA certificate, region, and node group IAM role) for connecting kubectl and referencing resources externally.

output "cluster_name" {
  description = "EKS cluster name."
  value       = module.eks.cluster_name
}

output "cluster_endpoint" {
  description = "EKS API server endpoint."
  value       = module.eks.cluster_endpoint
}

output "cluster_ca_certificate" {
  description = "Base64 encoded certificate authority."
  value       = module.eks.cluster_certificate_authority_data
}

output "region" {
  description = "AWS region."
  value       = var.aws_region
}

output "node_group_role_arn" {
  description = "IAM role ARN of the node group."
  value       = module.eks.eks_managed_node_groups["default"].iam_role_arn
}

providers.tf#

This file configures the AWS provider and sets the region where all infrastructure will be deployed.

provider "aws" {
  region = var.aws_region
}

terraform.tfvars#

This file specifies the actual values used when applying the configuration, such as the region, project name, cluster name, node sizes, and NAT gateway settings.

aws_region   = "eu-central-1"
project      = "eks-portfolio"
cluster_name = "eks-portfolio"

node_instance_types   = ["t3.small"]
node_desired_capacity = 2
node_min_size         = 1
node_max_size         = 3

enable_nat_gateway = true

variables.tf#

This file defines all configurable inputs for the deployment, including VPC CIDRs, subnets, AZs, instance types, scaling limits, and cluster parameters.

variable "aws_region" {
  description = "AWS region where EKS will be created."
  type        = string
  default     = "eu-central-1" # Frankfurt – change if you want
}

variable "project" {
  description = "Short name of the project used for tagging."
  type        = string
  default     = "eks-portfolio"
}

variable "cluster_name" {
  description = "EKS cluster name."
  type        = string
  default     = "eks-portfolio"
}

variable "vpc_cidr" {
  description = "CIDR block for the VPC."
  type        = string
  default     = "10.0.0.0/16"
}

variable "azs" {
  description = "Availability zones to use."
  type        = list(string)
  default = [
    "eu-central-1a",
    "eu-central-1b",
    "eu-central-1c",
  ]
}

variable "public_subnets" {
  description = "Public subnet CIDRs."
  type        = list(string)
  default = [
    "10.0.0.0/24",
    "10.0.1.0/24",
    "10.0.2.0/24",
  ]
}

variable "private_subnets" {
  description = "Private subnet CIDRs."
  type        = list(string)
  default = [
    "10.0.10.0/24",
    "10.0.11.0/24",
    "10.0.12.0/24",
  ]
}

variable "node_instance_types" {
  description = "Instance types for EKS managed node group."
  type        = list(string)
  default     = ["t3.small"]
}

variable "node_desired_capacity" {
  description = "Desired number of worker nodes."
  type        = number
  default     = 2
}

variable "node_min_size" {
  description = "Minimum number of worker nodes."
  type        = number
  default     = 1
}

variable "node_max_size" {
  description = "Maximum number of worker nodes."
  type        = number
  default     = 3
}

variable "enable_nat_gateway" {
  description = "Whether to enable NAT Gateway (costs money!)."
  type        = bool
  default     = true
}

versions.tf#

This file declares the required Terraform version and pins the AWS provider version for consistent builds.

terraform {
  required_version = ">= 1.6"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

Deployment Process#

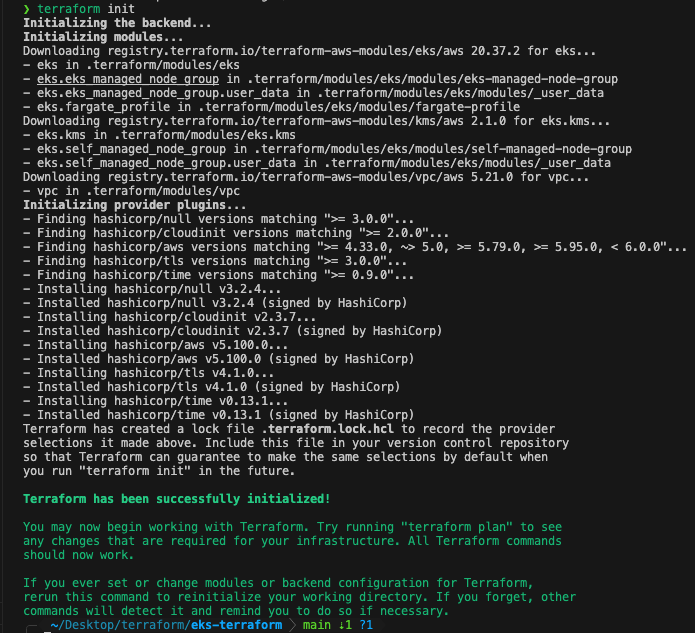

1. Initialize Terraform#

Terraform is initialized using terraform init, which downloads the AWS provider and all required Terraform modules.

terraform init

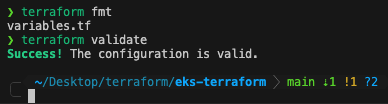

2. Validate the configuration#

terraform fmt

terraform validate
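For use in a script or CI job, a non-mutating variant may be preferable. The sketch below assumes a hypothetical wrapper named check_config, which is not part of the original project. With -check, terraform fmt reports unformatted files through a non-zero exit code instead of rewriting them, and -recursive also covers subdirectories.

```shell
# Hypothetical wrapper (illustrative name): fail fast if any file is
# unformatted or the configuration does not validate.
check_config() {
  # -check leaves files untouched and exits non-zero if formatting is needed
  terraform fmt -check -recursive || return 1
  terraform validate
}
```

Calling check_config from the project directory returns a non-zero status as soon as either step fails, which makes it suitable as a gate before terraform plan.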

Running terraform fmt and terraform validate to ensure formatting and configuration correctness.

3. Review the infrastructure plan#

terraform plan -no-color > plan.txt

This step shows exactly which AWS resources will be created before anything is changed.
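One possible refinement, sketched here under assumptions, is to save the plan as a binary file so that a later apply executes exactly what was reviewed. The function name plan_to_file and the file name tfplan are illustrative and not part of the original project; -out and terraform show are standard Terraform CLI features.

```shell
# Hypothetical helper: save the plan as a binary file, then render a
# readable copy for review. "tfplan" is an illustrative file name.
plan_to_file() {
  terraform plan -out=tfplan || return 1
  terraform show -no-color tfplan > plan.txt
}
```

After plan.txt has been reviewed, terraform apply tfplan applies the saved plan as-is, without computing a new one.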

4. Create the EKS infrastructure#

terraform apply -auto-approve

Terraform deployed:

- VPC + subnets

- NAT Gateway

- Route tables

- EKS control plane

- EKS managed node group

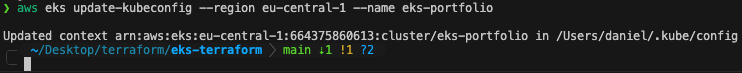

5. Configure kubectl for the new cluster#

aws eks update-kubeconfig --region eu-central-1 --name eks-portfolio
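Rather than repeating the region and cluster name by hand, the same command can be driven by the region and cluster_name outputs defined in outputs.tf. This is a sketch that assumes it runs in the Terraform working directory after a successful apply; the function name update_kubeconfig_from_outputs is hypothetical.

```shell
# Hypothetical helper: build the kubeconfig entry from Terraform outputs
# instead of hard-coded values. Must run where the Terraform state is available.
update_kubeconfig_from_outputs() {
  region=$(terraform output -raw region) || return 1
  name=$(terraform output -raw cluster_name) || return 1
  aws eks update-kubeconfig --region "$region" --name "$name"
}
```

This keeps the kubeconfig step in sync with terraform.tfvars if the region or cluster name changes later.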

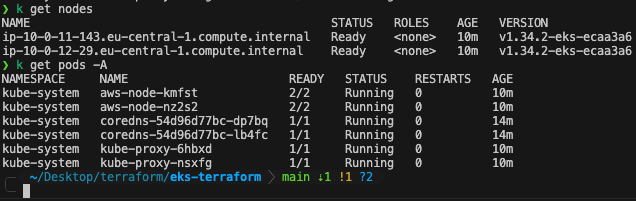

Configuring kubectl access to the EKS cluster.

6. Verify cluster health#

kubectl get nodes

kubectl get pods -A

Both worker nodes appeared as Ready, and all system pods (CoreDNS, AWS CNI, kube-proxy) were running correctly.
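The node check can also be scripted. The following sketch counts nodes whose STATUS column is exactly Ready; the function name ready_node_count is an assumption for illustration, and nodes reported with a compound status such as Ready,SchedulingDisabled are deliberately not counted.

```shell
# Hypothetical helper: count nodes whose STATUS column is exactly "Ready".
# Prints 0 when no node matches.
ready_node_count() {
  kubectl get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}
```

With the settings from terraform.tfvars, the expected count is 2. To block until nodes are ready instead of polling, kubectl wait --for=condition=Ready nodes --all --timeout=300s is an alternative.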

Destroying the EKS Infrastructure with Terraform#

This section describes how the entire EKS cluster and all related AWS resources are safely destroyed using terraform destroy. The goal is to ensure clean removal without leaving orphaned infrastructure or unexpected AWS costs.

1. Review the Resources Scheduled for Destruction#

Before resources are removed, the destroy plan is previewed to verify exactly what terraform destroy will delete:

terraform plan -destroy -no-color > plan-destroy.txt

This lists all resources that will be removed, including:

- EKS control plane

- EKS managed node group

- VPC

- Public and private subnets

- NAT Gateway

- Route tables and associations

- Security groups and IAM roles

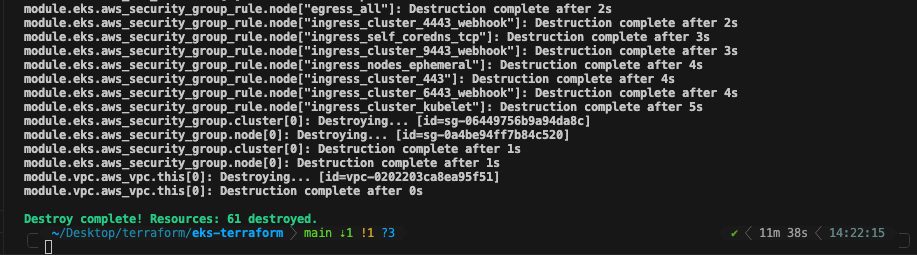

2. Destroy the Entire EKS Environment#

After confirming the destroy plan, I execute the full teardown:

terraform destroy -auto-approve

Terraform processes the removal in the correct dependency order:

- Worker nodes are terminated

- Managed node group is deleted

- EKS control plane is removed

- Network infrastructure (subnets, route tables, NAT gateway) is destroyed

- The VPC is removed last

This ensures no resource conflicts and avoids leaving behind billable components.

3. Verify That All Resources Are Removed#

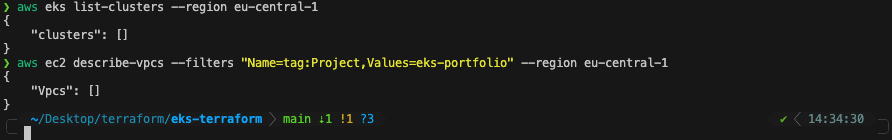

After the destroy finishes, I verify that nothing remains:

aws eks list-clusters --region eu-central-1

Then I check the VPC and network components:

aws ec2 describe-vpcs --filters "Name=tag:Project,Values=eks-portfolio" --region eu-central-1

At this point, both commands return no results, confirming that all EKS and VPC resources have been successfully removed.
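For an automated teardown check, the cluster listing can be turned into an exit code. This is a minimal sketch; the function name cluster_is_gone and its exit-code convention (0 gone, 1 still present, 2 CLI error) are assumptions made for illustration.

```shell
# Hypothetical helper: succeed only when the named cluster is absent.
# $1 = AWS region, $2 = cluster name.
# Exit codes: 0 = gone, 1 = still listed, 2 = the AWS CLI call failed.
cluster_is_gone() {
  clusters=$(aws eks list-clusters --region "$1" --query 'clusters' --output text) || return 2
  for name in $clusters; do
    [ "$name" = "$2" ] && return 1
  done
  return 0
}
```

A wrapper script could call cluster_is_gone eu-central-1 eks-portfolio and treat exit code 1 as a signal that the teardown is incomplete.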

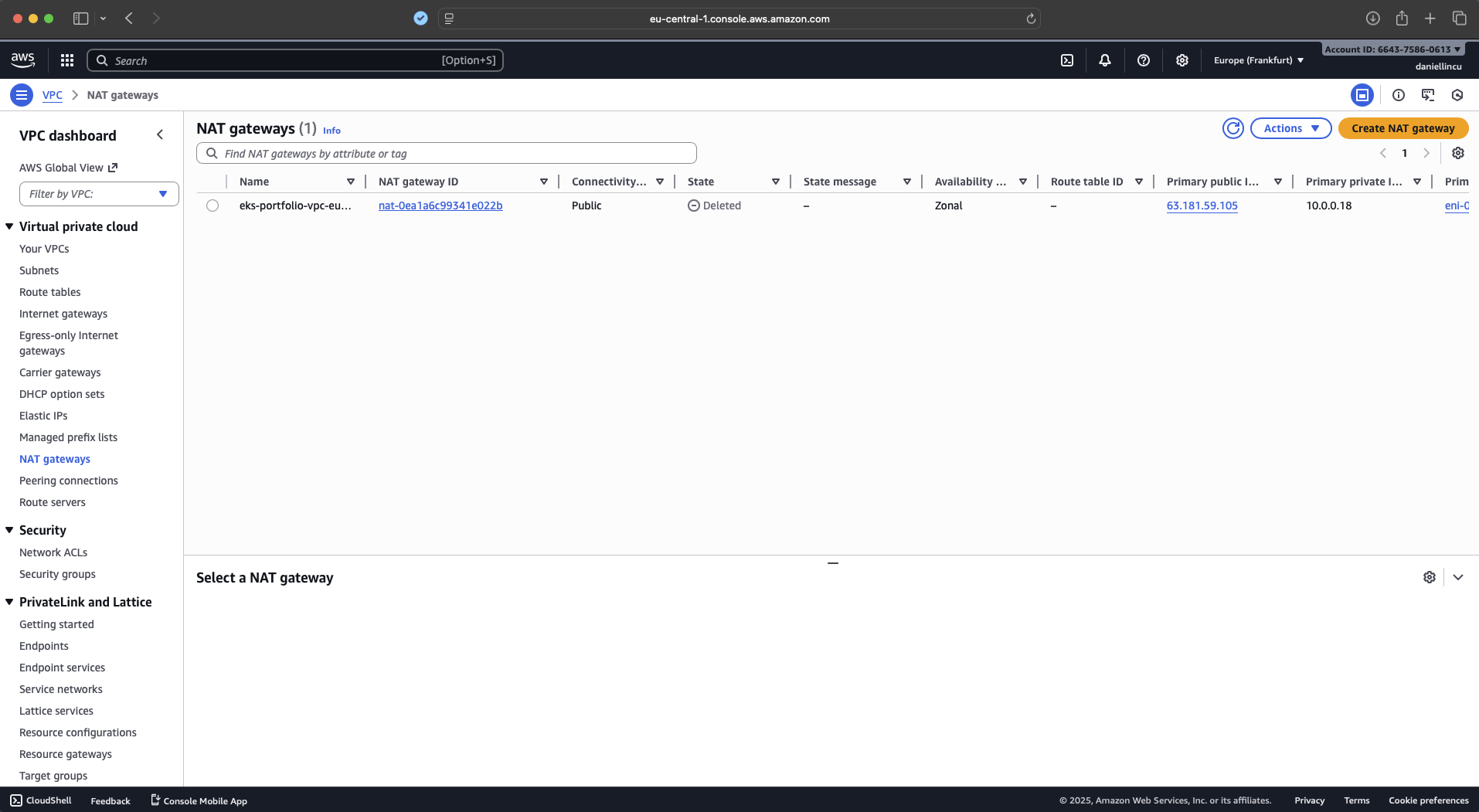

aws eks list-clusters returns no clusters, and aws ec2 describe-vpcs shows no VPCs tagged with Project=eks-portfolio, confirming a clean Terraform destroy.

Because NAT Gateways are among the most expensive components in an EKS setup, a separate validation confirms that Terraform destroyed the NAT Gateway successfully.
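The NAT Gateway state can also be checked from the command line. The sketch below assumes the gateway carries the Project tag from locals.tf, which should hold because the VPC module receives tags = local.tags; the function name live_nat_gateways is hypothetical. Note that describe-nat-gateways takes --filter (singular), unlike describe-vpcs.

```shell
# Hypothetical helper: list IDs of tagged NAT gateways that are not yet
# in the "deleted" state. $1 = AWS region, $2 = value of the Project tag.
live_nat_gateways() {
  aws ec2 describe-nat-gateways \
    --region "$1" \
    --filter "Name=tag:Project,Values=$2" "Name=state,Values=pending,available,deleting" \
    --query 'NatGateways[].NatGatewayId' \
    --output text
}
```

After a clean destroy, live_nat_gateways eu-central-1 eks-portfolio should return no gateway IDs. Deleted gateways can remain visible in the console for a while, which is why the state filter excludes them.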

After terraform destroy, the NAT Gateway created for the EKS VPC shows a Deleted state in the AWS Console. This confirms that Terraform removed the networking resources cleanly, avoiding unnecessary cloud costs.

Key Takeaways#

- This project demonstrates the complete lifecycle of provisioning and destroying an EKS cluster using Terraform and official AWS modules.

- A modular IaC structure is used, separating the VPC, subnets, NAT gateway, EKS control plane, and managed node groups.

- The setup validates key concepts in AWS networking (public/private subnets, NAT gateway, route tables) and how EKS integrates with VPC resources.

- The cluster bootstrap workflow is automated using terraform init, terraform plan, terraform apply, terraform destroy, and aws eks update-kubeconfig.

- Cluster health is verified using kubectl, and the teardown confirms clean removal of the NAT gateway to avoid unnecessary cloud costs.

- The project highlights practical, job-ready experience with Terraform, AWS, EKS, IAM, and Kubernetes fundamentals.

References#

Terraform – Install CLI
https://developer.hashicorp.com/terraform/tutorials/aws-get-started/install-cli

AWS CLI – Installation Guide
https://docs.aws.amazon.com/cli/latest/userguide/getting-started-install.html

kubectl – Installation Documentation
https://kubernetes.io/docs/tasks/tools/

Terraform – AWS Getting Started Tutorials
https://developer.hashicorp.com/terraform/tutorials/aws-get-started